Code Is Cheap. Trust Is Expensive

The real bottleneck in AI-assisted Java development is no longer producing code. It is understanding it, verifying it, and deciding whether it deserves to be trusted.

For most of software history, teams lived with a simple scarcity model: writing code was expensive.

You needed time, attention, and experienced engineers to turn an idea into a working implementation. The cost of production shaped everything else. Planning mattered because typing, wiring, testing, and integrating all took real effort.

That model is gone.

Today, a decent AI assistant can generate a Spring controller, DTOs, validation annotations, tests, and a service stub in seconds. Boilerplate is no longer the bottleneck. First drafts are cheap, and the local feeling of speed is real.

But that does not mean software has gotten cheap.

It means the economics have changed.

The expensive part is no longer producing code. The expensive part is deciding whether that code deserves to exist in your system.

That is the shift many teams still have not fully absorbed.

AI did not eliminate engineering cost. It moved it.

The New Bottleneck

AI changes the shape of engineering work. It reduces the cost of generating syntax, but it does not remove the cost of understanding behavior, validating assumptions, or owning production outcomes.

That distinction matters.

If an AI tool writes a REST endpoint for you in thirty seconds, you still have to answer harder questions:

Does it actually implement the business rule correctly?

Did it invent framework behavior that does not exist?

Did it quietly skip validation or error handling?

Is the code readable enough that someone else can maintain it?

What happens when this logic reaches concurrency, load, or real users?
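The last question is the one a compiler cannot answer for you. The sketch below shows the kind of plausible code an assistant might produce for a page-view counter, next to a version that holds up under concurrent calls. The `ViewCounter` class and its methods are hypothetical and written only for illustration. The sketch uses only the JDK.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;

public class ViewCounter {
    private final ConcurrentHashMap<String, Integer> counts = new ConcurrentHashMap<>();

    // Plausible draft: compiles and passes a single-threaded test, but
    // get-then-put is two separate steps, so concurrent callers can read the
    // same value and overwrite each other's increments.
    public void incrementRacy(String key) {
        Integer current = counts.get(key);
        counts.put(key, current == null ? 1 : current + 1);
    }

    // Safer version: ConcurrentHashMap.merge performs the whole
    // read-modify-write atomically for the given key.
    public void increment(String key) {
        counts.merge(key, 1, Integer::sum);
    }

    public int get(String key) {
        return counts.getOrDefault(key, 0);
    }

    // Runs `tasks` increments on a small thread pool and returns the final count.
    public static int countConcurrently(boolean racy, int tasks) {
        ViewCounter counter = new ViewCounter();
        ExecutorService pool = Executors.newFixedThreadPool(8);
        for (int i = 0; i < tasks; i++) {
            pool.submit(() -> {
                if (racy) {
                    counter.incrementRacy("home");
                } else {
                    counter.increment("home");
                }
            });
        }
        pool.shutdown();
        try {
            pool.awaitTermination(30, TimeUnit.SECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return counter.get("home");
    }

    public static void main(String[] args) {
        System.out.println(countConcurrently(false, 10_000)); // 10000
        // May print less than 10000, and the result can differ from run to run.
        System.out.println(countConcurrently(true, 10_000));
    }
}
```

Both versions look equally reasonable in a diff. Only the second one survives real traffic without silently losing updates.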

This is the trap many teams fall into early. They measure typing speed and call it productivity.

But engineering was never just typing. The real work has always included judgment, tradeoffs, verification, and accountability.

AI makes that more obvious, not less.

Why Java Is a Particularly Interesting Case

Java is one of the better ecosystems for learning how to work with AI productively because it pushes back.

The compiler pushes back. The type system pushes back. Tests push back. Spring conventions push back. Build tooling pushes back.

That is useful.

In weaker feedback environments, generated code can look plausible for a long time before anyone realizes it is wrong. In Java, bad assumptions often surface quickly. A hallucinated API, wrong annotation, missing bean, or invalid generic signature tends to fail fast, either at compile time or when the application context starts.

That makes Java a good language for AI-assisted development, but for a very specific reason: it helps engineers calibrate trust.
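Here is a small sketch of that feedback loop. The `FailFastDemo` class and `isMissing` method are hypothetical. The hallucination in the comment is also an assumption, chosen because it is a realistic one: `isNullOrBlank()` exists as a Kotlin extension function, but it is not a `java.lang.String` method, so `javac` rejects it before the code can be merged.

```java
public class FailFastDemo {

    // Returns true when a request field is absent or contains only whitespace.
    public static boolean isMissing(String value) {
        // A plausible hallucination would be `value.isNullOrBlank()`. That is a
        // Kotlin extension function, not a java.lang.String method, so javac
        // stops with "cannot find symbol" instead of letting it reach review.
        return value == null || value.isBlank(); // String.isBlank() requires Java 11+
    }

    public static void main(String[] args) {
        System.out.println(isMissing(null));    // true
        System.out.println(isMissing("   "));   // true
        System.out.println(isMissing("sku-1")); // false
    }
}
```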

AI often performs well on:

repetitive CRUD scaffolding

DTO and mapper generation

basic controller and service structure

repetitive test setup

documentation drafts

AI is much less reliable when the task depends on:

ambiguous business rules

unusual framework interactions

concurrency behavior

transaction boundaries

performance sensitivity

architecture tradeoffs

This is not an abstract complaint about “AI limitations.” It is a practical distinction between pattern matching and engineering judgment.

Faster Is Not the Same as Better

The most dangerous AI failure mode in software is not nonsense output. Engineers usually catch obvious nonsense.

The dangerous failure mode is plausible output.

Plausible code looks clean enough to merge. It compiles. It might even pass a shallow test. It feels done before it is actually trustworthy.
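A minimal sketch of that failure mode follows. It assumes a hypothetical business rule: orders of 100.00 or more ship free. The `ShippingRule` class and both methods are illustrative only. Both methods compile and read cleanly, and only a test at the boundary tells them apart.

```java
import java.math.BigDecimal;

public class ShippingRule {
    // Hypothetical rule for illustration: orders of 100.00 or more ship free.
    static final BigDecimal FREE_SHIPPING_THRESHOLD = new BigDecimal("100.00");

    // Plausible draft: uses "greater than" where the rule says "or more",
    // so an order of exactly 100.00 is wrongly charged for shipping.
    public static boolean qualifiesDraft(BigDecimal orderTotal) {
        return orderTotal.compareTo(FREE_SHIPPING_THRESHOLD) > 0;
    }

    // Corrected version: ">= 0" matches "100.00 or more".
    public static boolean qualifies(BigDecimal orderTotal) {
        return orderTotal.compareTo(FREE_SHIPPING_THRESHOLD) >= 0;
    }

    public static void main(String[] args) {
        BigDecimal typical = new BigDecimal("150.00");
        BigDecimal boundary = new BigDecimal("100.00");
        // A shallow test with a typical value cannot tell the two apart.
        System.out.println(qualifiesDraft(typical) + " " + qualifies(typical));   // true true
        // The boundary value exposes the off-by-one.
        System.out.println(qualifiesDraft(boundary) + " " + qualifies(boundary)); // false true
    }
}
```

Nothing about the draft looks wrong. It is one comparison operator away from the rule, which is exactly the kind of defect a quick review waves through.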

That creates a new kind of cost:

more review effort

more hidden assumptions

more maintenance burden

more rework later in the lifecycle

In other words, AI can reduce initial implementation time while increasing downstream cost.

This is why “the AI made me faster” is not yet a complete statement. Faster at what? Faster to first draft? Faster to merge? Faster to trustworthy software? Faster to production incidents?

Those are different questions.

The Right Habit: Measure, Don’t Generalize

A lot of AI conversations drift into ideology very quickly.

One engineer says, “AI is amazing, I built this endpoint in minutes.”

Another says, “AI is overhyped, I spent longer fixing its mistakes than writing it myself.”

Both can be telling the truth.

The problem is that neither statement is specific enough to be useful.

The more productive habit is to measure the work directly.

Take one small Java task. Implement it manually. Then implement the same task with AI assistance. Compare:

cycle time

prompt iterations

compile or test failures

review burden

number of corrections

confidence in correctness
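One low-ceremony way to keep the comparison honest is to record each attempt in the same shape. The sketch below relies only on the JDK and needs Java 16+ for records. The `TaskComparison` class, the field names, the 1-5 confidence scale, and the use of review comments as a stand-in for review burden are all hypothetical choices. The numbers in `main` are placeholders, not measurements.

```java
import java.time.Duration;

public class TaskComparison {

    // One attempt at the same bounded task. Fields mirror the comparison list;
    // reviewComments approximates "review burden" (an assumption), and
    // confidence is a self-rating from 1 to 5 (also an assumption).
    public record Attempt(String mode, Duration cycleTime, int promptIterations,
                          int compileOrTestFailures, int reviewComments,
                          int corrections, int confidence) {

        public Attempt {
            if (confidence < 1 || confidence > 5) {
                throw new IllegalArgumentException("confidence must be between 1 and 5");
            }
        }

        String row() {
            return String.format("%-9s cycle=%dmin prompts=%d failures=%d review=%d corrections=%d confidence=%d",
                    mode, cycleTime.toMinutes(), promptIterations, compileOrTestFailures,
                    reviewComments, corrections, confidence);
        }
    }

    // Positive result: the assisted attempt reached "done" faster.
    public static Duration timeSaved(Attempt manual, Attempt assisted) {
        return manual.cycleTime().minus(assisted.cycleTime());
    }

    public static void main(String[] args) {
        // Placeholder values only; replace them with your own recorded numbers.
        Attempt manual = new Attempt("manual", Duration.ofMinutes(45), 0, 2, 3, 1, 4);
        Attempt assisted = new Attempt("assisted", Duration.ofMinutes(20), 4, 5, 7, 6, 3);

        System.out.println(manual.row());
        System.out.println(assisted.row());
        System.out.println("time saved: " + timeSaved(manual, assisted).toMinutes() + " min");
    }
}
```

Keeping every field in one record makes it harder to report cycle time on its own, which is the “Faster at what?” question in miniature.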

That kind of comparison does something important: it replaces opinion with calibration.

You stop asking whether AI is “good” or “bad” in general. You start learning where it is cheap leverage and where it creates expensive uncertainty.

That is the beginning of an AI-native engineering mindset.

Human Judgment Did Not Get Less Important

If anything, it became more important.

When code becomes abundant, selectivity matters more. Review quality matters more. Taste matters more. System awareness matters more.

The engineer’s role shifts from pure producer toward:

evaluator

integrator

reviewer

constraint keeper

risk owner

That is not a downgrade. It is a more explicit version of what senior engineers were already doing.

The difference is that AI amplifies both good and bad discipline.

If your engineering habits are weak, AI can help you create more low-quality software faster.

If your engineering habits are strong, AI can remove drudge work and give you more time for the parts that actually require expertise.

The tool does not decide which of those outcomes you get. Your workflow does.

A Better First Question

Instead of asking:

“Can AI write this?”

Ask:

“What does AI change about the cost of producing, reviewing, and trusting this work?”

That is a better engineering question. It forces you to think about the whole lifecycle rather than the demo moment.

And that, more than any prompt trick or model comparison, is the mindset shift that matters.

Code is cheap now.

Judgment is not.

Trust is not.

And the teams that understand that early will build better systems than the teams that confuse generation with engineering.

If You Want to Turn This Into Practice

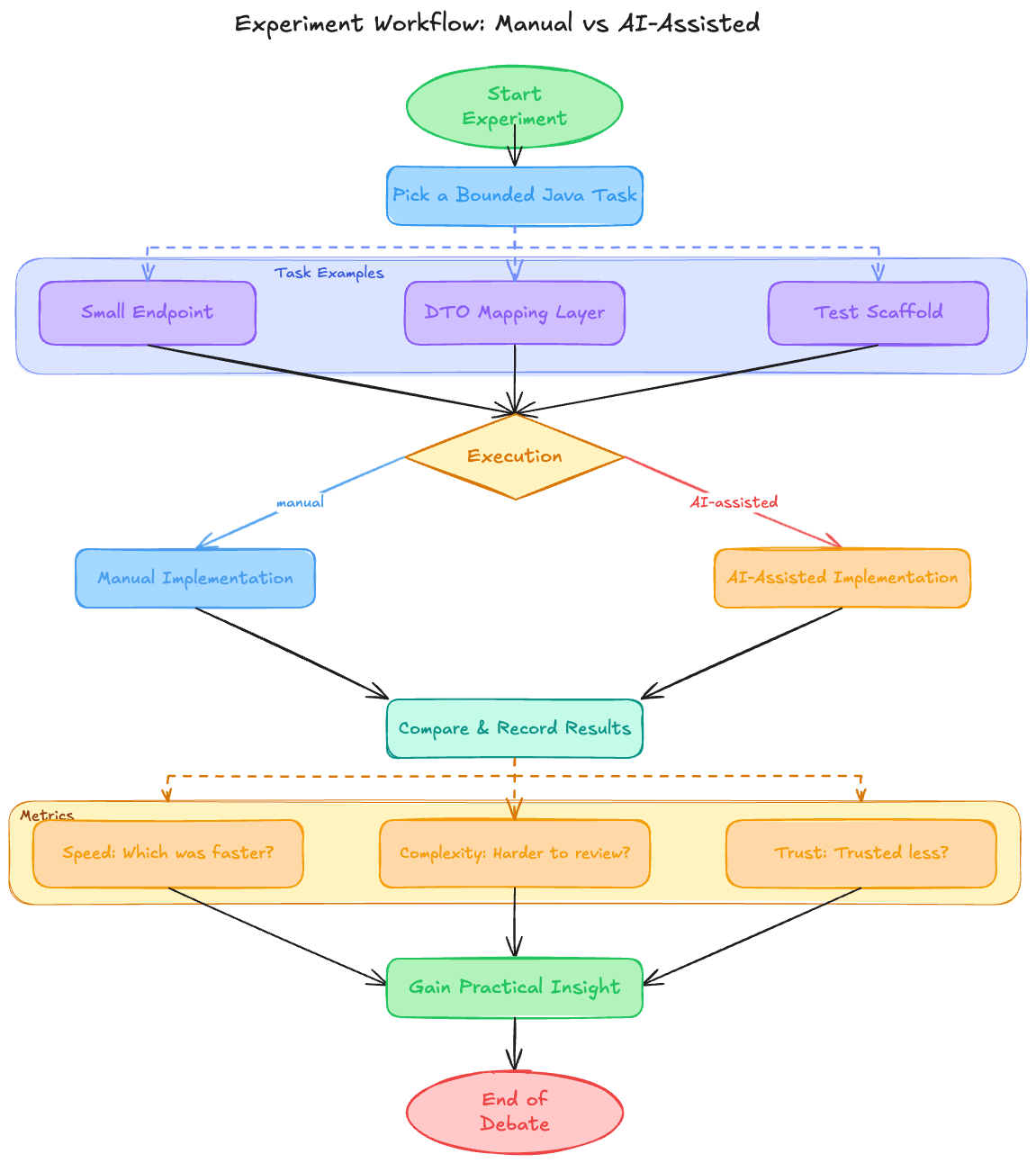

Start with one small experiment in your own Java workflow:

Pick a bounded task like a small endpoint, DTO mapping layer, or test scaffold.

Do it once manually and once with AI assistance.

Record what was actually faster, what was harder to review, and what you trusted less.

That simple exercise will teach you more than a month of abstract debate.

If you want a one-line summary of the whole argument, it is this:

AI has made code abundant. It has not made judgment optional.