Before You Ask AI to Code, Write a Better Spec

In AI-assisted development, ambiguity does not go away. It turns into code that looks plausible. One of the fastest ways to improve the output is to refine the spec before implementation begins.

One of the easiest mistakes to make with AI coding tools is to think they reduce the need for a good spec.

In practice, they often increase it.

That sounds backwards at first. If a model can turn a vague product request into a controller, DTOs, validations, tests, and a service implementation in a few minutes, why spend more time writing down contracts, decisions, and requirements first?

Because AI is very good at filling in the blanks.

And that is exactly what makes vague software requirements dangerous.

AI Does Not Remove Ambiguity. It Amplifies It.

Give a human engineer an underspecified product brief, and you usually get friction.

They ask questions.

They hesitate.

They surface missing constraints.

They push back on hand-wavy requirements like “make it secure,” “return what the frontend needs,” or “just add a login endpoint quickly.”

Give that same brief to an AI system, and you often get something else: a confident answer.

The model will choose a token format. It will invent an error shape. It will decide what “useful error” means. It will make assumptions about validation, expiry, status codes, disabled users, lockouts, and audit behavior.

Sometimes those assumptions will be reasonable.

That is the problem.

Reasonable is not the same as correct.

In AI-assisted development, ambiguity does not vanish. It turns into output that can look finished before it is actually right.

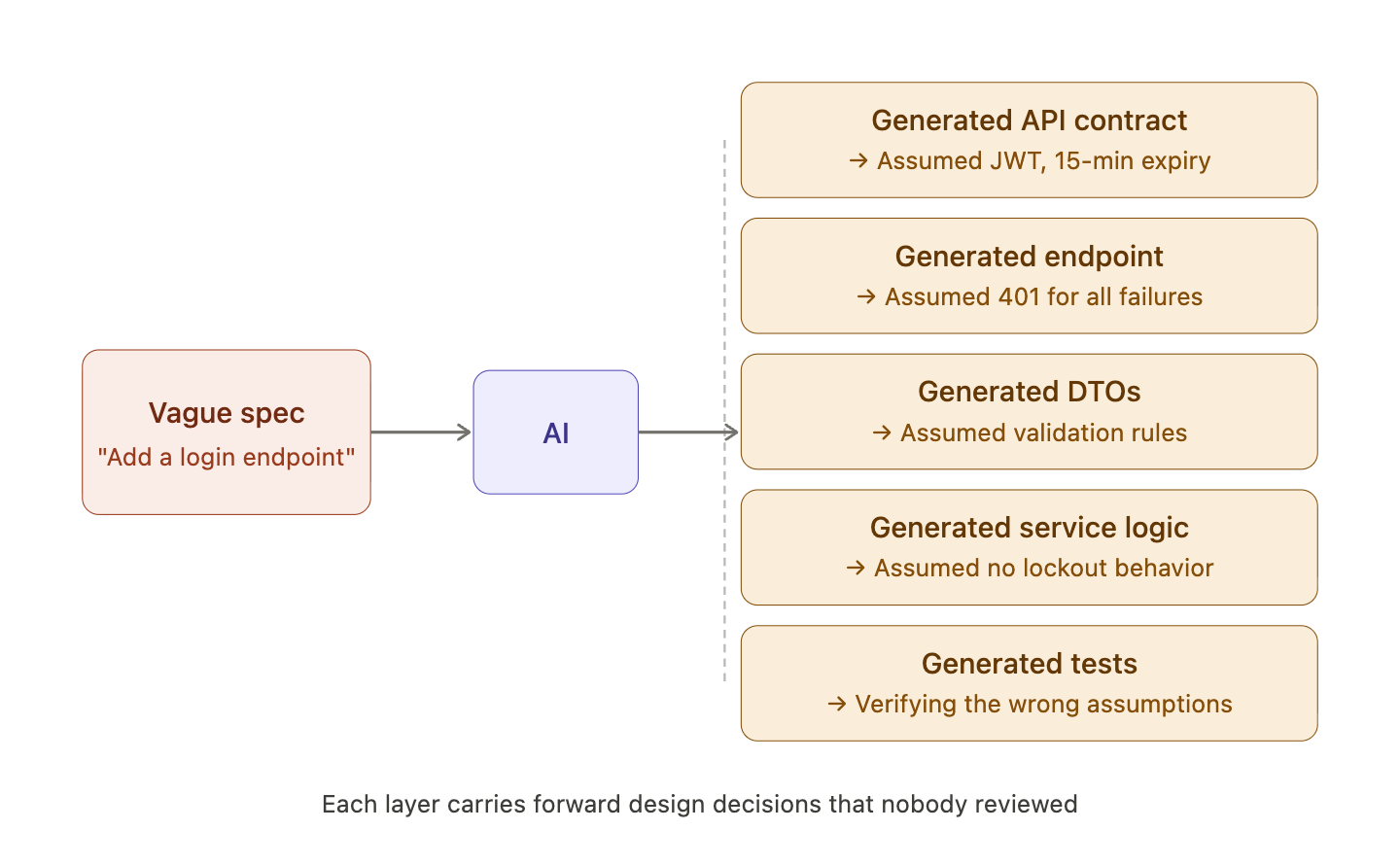

A single vague prompt can cascade into a whole stack of unreviewed decisions.

A Small Example: “Add a Login Endpoint”

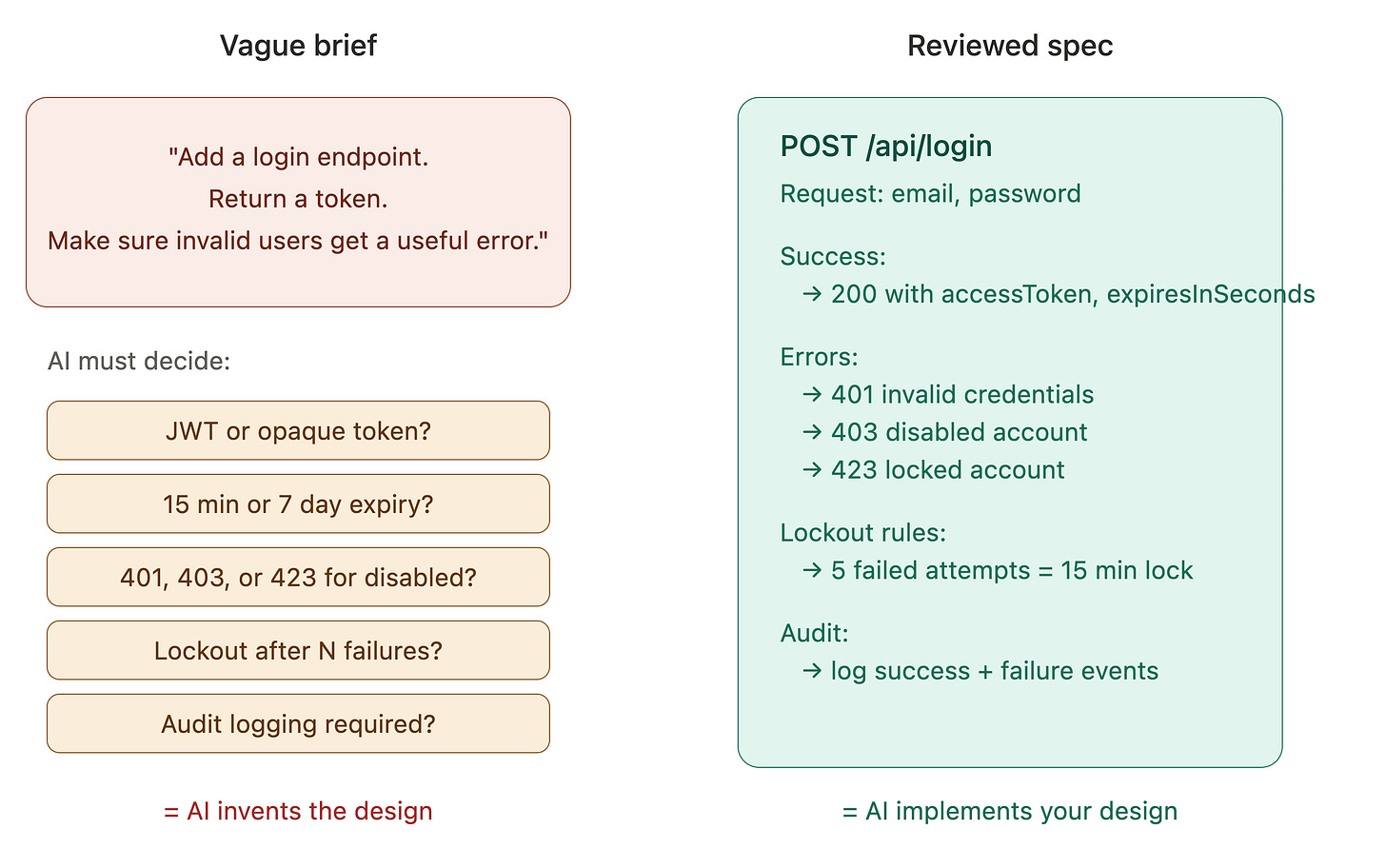

Imagine the brief is only this:

Add a login endpoint for the app. Return a token. Make sure invalid users get a useful error.

That sounds simple.

But it leaves critical decisions unstated:

Is the token a JWT or an opaque session token?

Does it expire in 15 minutes or 7 days?

Do disabled users return `403`, `423`, or `401`?

Does a locked account behave differently from a bad password?

Do repeated failures trigger a lockout?

Is login activity audited?

Ask an AI assistant to implement that brief directly, and it will usually make those choices for you. Some of those defaults may even look sensible. You may get clean Spring Boot code, DTOs, and tests.

But now the code is carrying design decisions that nobody actually reviewed as design decisions.

Now compare that with a slightly better input:

`POST /api/login`

request: `email`, `password`

success: `200` with `accessToken` and `expiresInSeconds`

invalid credentials: `401`

disabled account: `403`

locked account: `423`

failed attempts increment the lock count

lock after 5 failed attempts for 15 minutes

successful and failed logins must be audited

That is still a small feature.

But it is a completely different task.

The AI is no longer being asked to invent behavior. It is being asked to implement behavior that has already been reviewed.
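As a rough illustration of what implementing reviewed behavior can look like, the sketch below encodes the status codes and the lockout rule from the spec above in plain Java. The names `LoginOutcome`, `AccountState`, and `LoginRules` are hypothetical. Two things are assumptions the spec does not settle: the order in which the checks run, and that the 15-minute lock window starts at the most recent failed attempt.

```java
import java.time.Duration;
import java.time.Instant;

/** Outcomes named in the spec, each tied to its reviewed status code. */
enum LoginOutcome {
    SUCCESS(200),
    INVALID_CREDENTIALS(401),
    DISABLED(403),
    LOCKED(423);

    final int status;

    LoginOutcome(int status) {
        this.status = status;
    }
}

/** Hypothetical account snapshot; the field names are illustrative only. */
final class AccountState {
    final boolean disabled;
    final int failedAttempts;
    final Instant lastFailureAt; // null when there has been no failed attempt

    AccountState(boolean disabled, int failedAttempts, Instant lastFailureAt) {
        this.disabled = disabled;
        this.failedAttempts = failedAttempts;
        this.lastFailureAt = lastFailureAt;
    }
}

final class LoginRules {
    static final int MAX_FAILED_ATTEMPTS = 5;                     // from the spec
    static final Duration LOCK_DURATION = Duration.ofMinutes(15); // from the spec

    /** Assumption: the lock window is measured from the most recent failure. */
    static boolean isLocked(AccountState account, Instant now) {
        return account.failedAttempts >= MAX_FAILED_ATTEMPTS
                && account.lastFailureAt != null
                && now.isBefore(account.lastFailureAt.plus(LOCK_DURATION));
    }

    /** Assumption: disabled is checked first, then locked, then the password. */
    static LoginOutcome decide(AccountState account, boolean passwordMatches, Instant now) {
        if (account.disabled) {
            return LoginOutcome.DISABLED;
        }
        if (isLocked(account, now)) {
            return LoginOutcome.LOCKED;
        }
        return passwordMatches ? LoginOutcome.SUCCESS : LoginOutcome.INVALID_CREDENTIALS;
    }
}
```

Writing even this much surfaces questions the spec still leaves open, such as check order and when the lock window begins. Those are worth sending back for review rather than letting a model pick.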

This Is Why Specification Quality Matters More Now

Before AI-assisted coding became common, weak specs caused downstream problems slowly. A team might lose time in implementation, discover mismatches in testing, and eventually patch the gaps through conversation and rework.

Now that implementation is cheap, those same gaps travel faster.

A weak brief can become:

a generated API contract

a generated Spring Boot endpoint

generated DTOs and validations

generated tests that only verify the wrong assumptions

You do not just get one bad interpretation. You get a whole stack of them.

This is why specs matter more now. They are how you keep the model from making product and design decisions for you.

A good spec shapes the implementation.

A weak spec gives the model permission to improvise.

The Contract Should Exist Before the Code

One of the most useful habits for Java teams using AI is to move the contract earlier.

Before writing implementation, define:

request shape

response shape

validation rules

error behavior

status codes

boundaries of responsibility

This is where OpenAPI becomes much more than API documentation.

Used well, it becomes something that shapes the implementation instead of describing it after the fact.

That distinction matters.

If you ask AI to implement a login endpoint from a product brief, it will produce something plausible.

If you ask AI to implement a login endpoint from a reviewed OpenAPI contract, explicit failure cases, and a few architecture decisions, you have changed the task completely.

You are no longer asking it to invent the shape of the system.

You are asking it to stay inside clear boundaries.

That is a much safer use of the tool.
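One way to pin the request and response shape down before any controller exists is to write them as Java records. This is a minimal sketch, assuming Java 16 or later. The field names come from the login spec earlier in the article, while the validation rules and the `LoginError` body are assumptions made for illustration, since the spec does not define them.

```java
/** Request shape from the spec: email and password. The non-blank checks are an assumption. */
record LoginRequest(String email, String password) {
    LoginRequest {
        if (email == null || email.isBlank()) {
            throw new IllegalArgumentException("email is required");
        }
        if (password == null || password.isEmpty()) {
            throw new IllegalArgumentException("password is required");
        }
    }
}

/** Success shape from the spec: 200 with accessToken and expiresInSeconds. */
record LoginResponse(String accessToken, long expiresInSeconds) {
    LoginResponse {
        if (accessToken == null || accessToken.isBlank()) {
            throw new IllegalArgumentException("accessToken is required");
        }
        if (expiresInSeconds <= 0) {
            throw new IllegalArgumentException("expiresInSeconds must be positive");
        }
    }
}

/** Hypothetical error body: the spec fixes the status codes but not this shape. */
record LoginError(int status, String code) {
    LoginError {
        if (status != 401 && status != 403 && status != 423) {
            throw new IllegalArgumentException("status not in contract: " + status);
        }
        if (code == null || code.isBlank()) {
            throw new IllegalArgumentException("code is required");
        }
    }
}
```

In a real project these types would more likely be generated from, or checked against, the OpenAPI document. The point of the sketch is only that the shapes are decided and reviewable before implementation starts.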

Architecture Decisions Should Not Stay Implicit

Specification is not just about fields and endpoints.

It is also about the decisions engineers often leave unstated because they feel “obvious.”

Questions like:

What token format are we using?

How long does it live?

What happens on repeated failed login attempts?

What gets audited?

Should locked and disabled users behave differently?

These are not implementation details. They are design decisions.

And this is where AI can get risky, because it will quietly make those decisions for you.

That is where lightweight ADRs become useful.

ADR stands for Architecture Decision Record: a short document that captures one important technical decision, why it was made, and the trade-off accepted.

An ADR is not bureaucracy. It is a simple way to stop hidden decisions from being made by accident.

Even a short ADR forces clarity:

What decision was made

Why it was made

What alternatives existed

What trade-off was accepted

That kind of explicitness matters even more when implementation can be generated in seconds.
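An ADR is normally a short text document, but the four parts listed above can also be modeled as data so that an incomplete record is easy to spot. The `DecisionRecord` type below is a hypothetical sketch, assuming Java 16 or later, not a standard ADR format.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical in-code view of an ADR with the four parts listed above. */
record DecisionRecord(String decision, String rationale, List<String> alternatives, String tradeoff) {

    /** Returns the names of the parts that are still empty. */
    List<String> missingParts() {
        List<String> missing = new ArrayList<>();
        if (isBlank(decision)) {
            missing.add("decision");
        }
        if (isBlank(rationale)) {
            missing.add("rationale");
        }
        if (alternatives == null || alternatives.isEmpty()) {
            missing.add("alternatives");
        }
        if (isBlank(tradeoff)) {
            missing.add("tradeoff");
        }
        return missing;
    }

    private static boolean isBlank(String text) {
        return text == null || text.isBlank();
    }
}
```

A record that names a decision and its rationale but leaves the trade-off blank would report `tradeoff` as missing, which is a prompt to finish the thinking before any code is generated.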

Free-Form Prompts Are Weak Truth Sources

Another pattern worth changing is how teams treat prose.

Free-form product language is useful for communication, but it is a weak source of truth for implementation.

It is hard to compare.

It is hard to validate.

It is hard to tell whether the model extracted what you meant or what it preferred.

That is why structured requirements matter.

If you turn a brief into a typed requirement model, JSON schema, or some other structured format, you force ambiguity into the open. Missing rules become visible. Contradictions become visible. Invented assumptions become visible.
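A typed requirement model can be as small as a record whose empty fields are the open questions. The sketch below is hypothetical (the `LoginRequirements` name and its fields are chosen for illustration) and assumes Java 16 or later. It models the two login briefs from earlier in the article.

```java
import java.time.Duration;
import java.util.ArrayList;
import java.util.List;
import java.util.Optional;

/** Hypothetical typed view of the login brief. An empty Optional is an open question. */
record LoginRequirements(
        Optional<String> tokenFormat,
        Optional<Duration> tokenLifetime,
        Optional<Integer> disabledStatus,
        Optional<Integer> lockedStatus,
        Optional<Integer> maxFailedAttempts,
        Optional<Boolean> auditLogins) {

    /** Names of every rule the brief did not settle, in declaration order. */
    List<String> openQuestions() {
        List<String> open = new ArrayList<>();
        if (tokenFormat.isEmpty()) open.add("tokenFormat");
        if (tokenLifetime.isEmpty()) open.add("tokenLifetime");
        if (disabledStatus.isEmpty()) open.add("disabledStatus");
        if (lockedStatus.isEmpty()) open.add("lockedStatus");
        if (maxFailedAttempts.isEmpty()) open.add("maxFailedAttempts");
        if (auditLogins.isEmpty()) open.add("auditLogins");
        return open;
    }

    /** The one-line brief: nothing is decided. */
    static LoginRequirements fromVagueBrief() {
        return new LoginRequirements(
                Optional.empty(), Optional.empty(), Optional.empty(),
                Optional.empty(), Optional.empty(), Optional.empty());
    }

    /** The refined spec: status codes, lockout, and auditing are decided. */
    static LoginRequirements fromRefinedSpec() {
        return new LoginRequirements(
                Optional.empty(), Optional.empty(), Optional.of(403),
                Optional.of(423), Optional.of(5), Optional.of(true));
    }
}
```

Under this model the vague brief leaves all six questions open. The refined spec still leaves `tokenFormat` and `tokenLifetime` unanswered, because it names `expiresInSeconds` without saying what value it should carry. A structured model makes that kind of leftover gap visible.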

This is one of the most useful ways to use AI in engineering: not just to generate code, but to generate structured interpretations that people can inspect critically.

That is a much better role for the tool.

Instead of asking, “Can you build this?”

Ask, “What requirements do you think this implies?”

Then compare that output to your own interpretation.

That comparison is where the real value often lives.
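The comparison itself can be mechanical once both interpretations are in a structured form. The sketch below assumes each interpretation has been reduced to a flat map of requirement name to value. `RequirementDiff` and the keys used with it are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeSet;

final class RequirementDiff {

    /** Lists every key where the two interpretations disagree or one side is silent. */
    static List<String> differences(Map<String, String> mine, Map<String, String> model) {
        // Sorted union of keys so the report order is stable.
        TreeSet<String> keys = new TreeSet<>(mine.keySet());
        keys.addAll(model.keySet());

        List<String> report = new ArrayList<>();
        for (String key : keys) {
            String myValue = mine.get(key);
            String modelValue = model.get(key);
            if (myValue == null) {
                report.add(key + ": only the model specified '" + modelValue + "'");
            } else if (modelValue == null) {
                report.add(key + ": only I specified '" + myValue + "'");
            } else if (!myValue.equals(modelValue)) {
                report.add(key + ": mine='" + myValue + "' model='" + modelValue + "'");
            }
        }
        return report;
    }
}
```

Entries that only the model specified are the interesting ones: they are the assumptions it would otherwise have baked into code without anyone reviewing them.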

Contract Testing Beats Prompt Memory

There is another benefit to this approach: the contract outlives the prompt.

Prompts are temporary. Chat history is inconsistent. Tool behavior changes. A model may follow your instructions closely one day and loosely the next.

Contracts and tests are different.

They are durable.

They can be reviewed.

They can fail.

That is why contract testing matters when AI is part of the workflow. Contract testing means checking that the implementation still behaves according to the agreed contract, especially around request shape, response shape, status codes, and error behavior.

For example, if the contract says `POST /api/login` returns `401` for invalid credentials, `403` for a disabled user, and `423` for a locked user, a contract test can verify those behaviors directly. If the generated implementation starts returning `401` for every failure case because the model simplified the logic, the contract test should fail immediately.

That gives you something more stable than “the model understood me last time.”

The prompt may start the work, but the contract should be the truth source.

If the implementation drifts, the contract should catch it.

If the model invents behavior, the contract should reject it.

If the brief was unclear, the contract-writing process should expose that before the code reaches production.
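The login example above can be sketched as a tiny contract check. In a Spring Boot project this would normally be an HTTP-level test against the running endpoint. Here the implementation is stood in by a plain function from scenario name to status code so the sketch stays self-contained. `LoginContractCheck` and the scenario names are hypothetical.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.function.ToIntFunction;

final class LoginContractCheck {

    /** Expected status per failure scenario, taken from the contract in the text. */
    static final Map<String, Integer> CONTRACT = expected();

    private static Map<String, Integer> expected() {
        Map<String, Integer> statuses = new LinkedHashMap<>();
        statuses.put("invalid-credentials", 401);
        statuses.put("disabled-user", 403);
        statuses.put("locked-user", 423);
        return statuses;
    }

    /** Runs every scenario against the implementation and reports each mismatch. */
    static List<String> violations(ToIntFunction<String> implementation) {
        List<String> report = new ArrayList<>();
        for (Map.Entry<String, Integer> scenario : CONTRACT.entrySet()) {
            int actual = implementation.applyAsInt(scenario.getKey());
            if (actual != scenario.getValue()) {
                report.add(scenario.getKey() + ": expected " + scenario.getValue()
                        + " but got " + actual);
            }
        }
        return report;
    }
}
```

An implementation that collapses every failure into `401`, for example `LoginContractCheck.violations(scenario -> 401)`, produces two violations: one for the disabled user and one for the locked user.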

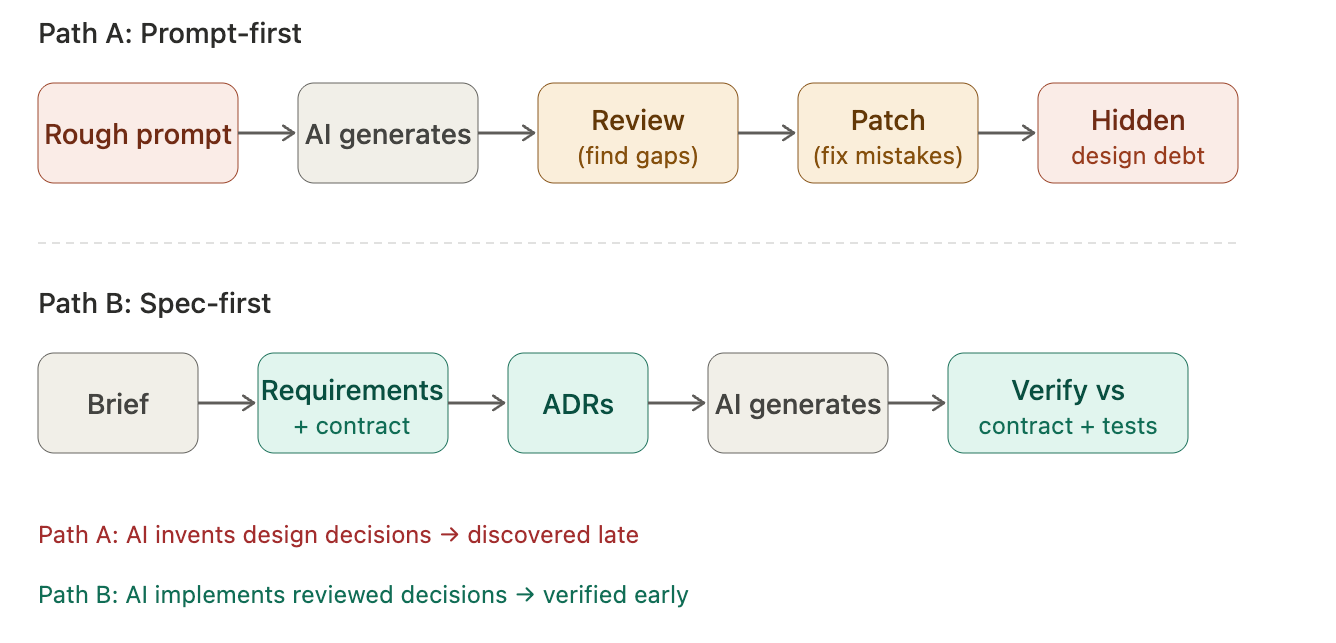

A Better Workflow for AI-Assisted Engineering

A lot of teams still use AI like this:

write a rough prompt

generate code

review what came back

patch the mistakes

That works for small, local tasks.

It breaks down quickly when the behavior matters.

A better workflow looks more like this:

start from the brief

extract and refine requirements

define the contract

record key architecture decisions

generate implementation against those artifacts

verify implementation against contract and tests

This is slower at the very beginning.

It is often much faster by the time you reach merge.

More importantly, it is safer.

It reduces the amount of hidden design work the model is doing without review.

What This Changes for Java Teams

For Java teams in particular, this approach fits pretty naturally.

Java tends to work well with explicit structure.

It works well with types, contracts, validation, and clear framework boundaries.

That means Java teams are in a good position to get value from AI without giving up control.

But only if they treat upstream artifacts seriously.

If you skip the spec step, AI will happily complete the design for you.

If you write the spec well, AI can speed up implementation instead of quietly taking over design decisions.

That is the difference.

The real leverage is not “AI writes code.”

The real leverage is “clear artifacts keep code generation grounded.”

A Better First Question

Before asking an AI assistant to implement a feature, ask:

What in this request is still ambiguous enough that the model will invent the answer?

That is usually the right starting point.

Because once the ambiguity is explicit, you can decide where it belongs:

in the API contract

in an ADR

in a typed requirements model

or back with the stakeholder for clarification

That is what spec-driven work looks like when AI is part of the process.

It is not more paperwork.

It is better upstream control.

If You Want to Try This in Practice

Pick one small feature request from your own backlog, especially one that sounds deceptively simple.

Before generating implementation:

Extract the requirements manually

Ask AI to extract them too

Compare the differences

Write a minimal contract and one decision record

Only then ask the AI to implement

That single exercise will tell you pretty quickly whether your real problem is implementation speed or spec quality.